Announcing the 47 86 Foundation

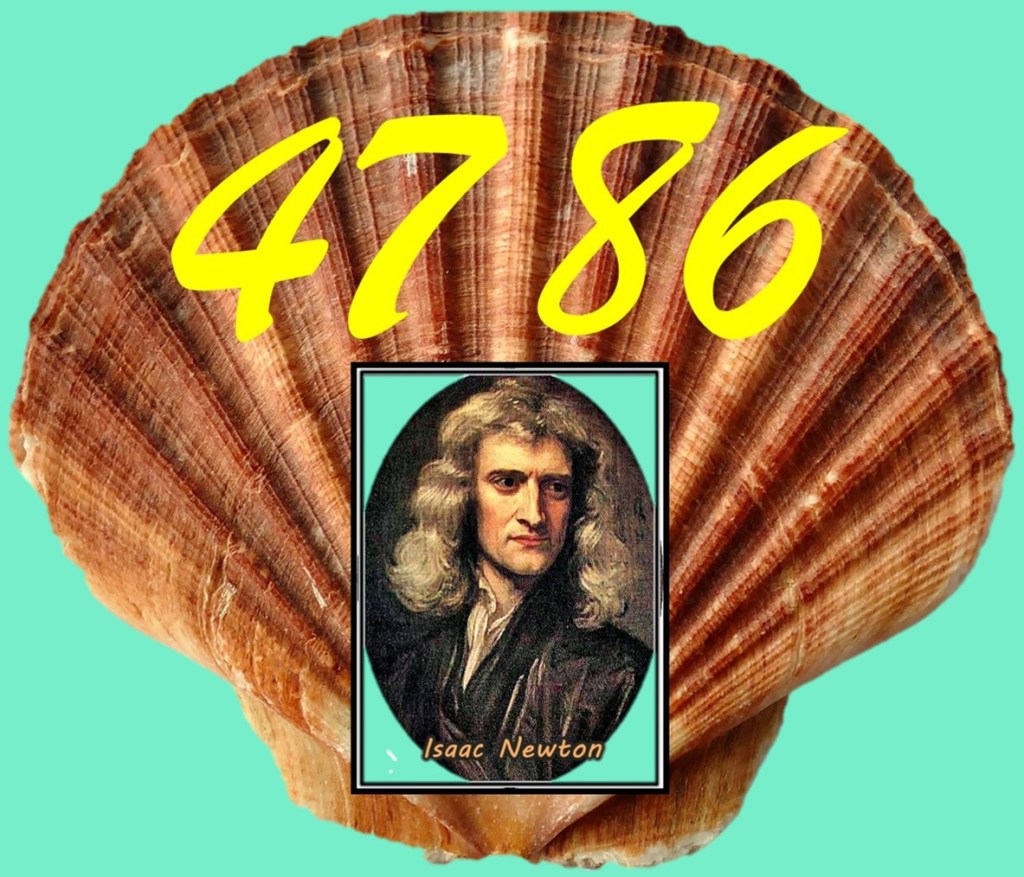

Today, I am announcing the formation of the 47 86 Foundation, a not-for-profit organisation headquartered at my home, which happens to be number 47 on my street in the village where I live. The Foundation's purpose is to champion the works and impact of my fellow alumnus, Isaac Newton, who attended the same UK grammar school as I did, albeit between 1655 and 1661. He went on to present the manuscript of Book 1 of his acclaimed, ground-breaking treatise, Philosophiae Naturalis Principia Mathematica, to the Royal Society in April 1686, thus beginning the transformation of our fundamental understanding of physics and mathematics.

Coincidentally, the two 2-digit numbers in the name of my 47 86 Foundation are the same as those laid out artistically in seashells by former FBI Director James Comey while he sat on a beach in North Carolina last year.

I have chosen to reverse the order of my two numbers to avoid confusion and to avert any suggestion that they contain a hidden threat to the 47th President of the USA, Donald Trump!

Exuberant mathematicians, jaded politicians and zealous lawyers are invited to apply to join the Foundation's Board.

PS. If you are unfamiliar with the fascinating account of James Comey's arrangement of the shells and Donald Trump's subsequent accusation that it was a coded threat to his life, you can read the story here: https://www.bbc.co.uk/news/articles/cvgz4rvlem5o

(^_^)